Normally, I’m ideologically opposed to these kinds of posts. “Best practices” can lead to checkbox thinking that constrains strategy down to an uncreative list of tasks. We don’t want to limit our creativity and accountability by turning ourselves into SEO box checkers, but we don’t want to throw all best practices into the recycling bin.

Best practices are a starting point for expertise, not a replacement for it. Following best practices is what we should do when we’re starting from zero on a project. They are training wheels of expertise.

I also don’t like these posts because they usually cover the same dozen hyped-up topics that don’t apply broadly. 2017, 2018, and 2019 were each supposed to be the year voice search became the next big thing, but very few brands actually need to create Alexa Skills.

SEO practices shouldn’t change very much year-to-year anyway. Google still has a monopoly on web search, and things like E-A-T are new names for what we’ve been doing all along.

Instead, this post is about how to upgrade our SEO practice in the year ahead by incorporating recent and upcoming changes in Google SEO. We want to make our SEO practice the best, not have the best boxes to check.

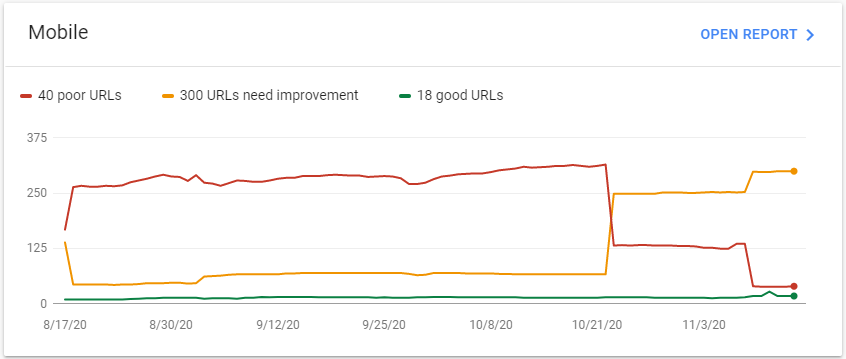

1. It’s Time to Think About Mobile-Only Indexing

We’ve heard the directive “think mobile first” from Google since at least 2011, but I’m certain most of us still review websites using desktop resolutions. This will have to change!

Google recently announced that starting in March 2021, mobile-first indexing will only include the mobile version of the web. Google will ignore the desktop views of our content for indexing and ranking. “M-dot” mobile sites will become the primary version, not the alternative, which could cause issues for websites that haven’t adopted responsive design.

I think this shift actually simplifies things because we no longer have to worry about the weighting of mobile and desktop content features. How mobile content renders is the only concern now. Whatever mobile Googlebot sees when it renders the page is what counts.

Best Practice: When designing new websites, making mockups, auditing websites, writing H1 tags, evaluating main navigation links, and so on, we have to be in the mobile-only headspace. It’s going to be annoying at first to simulate a mobile device with our browser all the time, but we’ll have to get used to it.
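One way to make the mobile-only headspace a habit is to script the first look at a page. The sketch below uses only Python's standard library to request a URL while identifying as a smartphone crawler. The function names are hypothetical, the user-agent string is modeled on the one Google publishes for its smartphone crawler (with the Chrome version left as a placeholder), and the script only sees the server's raw HTML rather than the rendered page, so treat it as a rough first check and not a substitute for device emulation in the browser.

```python
from urllib.request import Request, urlopen

# Assumption: modeled on the user agent Google documents for Googlebot Smartphone.
# "W.X.Y.Z" is a placeholder because the Chrome version changes over time.
MOBILE_GOOGLEBOT_UA = (
    "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) "
    "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 "
    "(compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
)


def build_mobile_request(url: str) -> Request:
    """Build a request that sends the mobile crawler user agent (hypothetical helper)."""
    return Request(url, headers={"User-Agent": MOBILE_GOOGLEBOT_UA})


def fetch_mobile_html(url: str, timeout: float = 10.0) -> str:
    """Download the raw HTML a server returns to a mobile user agent.

    This does not execute JavaScript, so it shows the unrendered response only.
    """
    with urlopen(build_mobile_request(url), timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling `fetch_mobile_html` on a page and on its m-dot counterpart is a quick way to spot content, headings, or navigation links that exist in only one of the two responses.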

2. Clearly and Directly Answer the User’s Query

The idea of “relevance” has evolved a lot over the years. Relevance in keyword search began by evaluating how well a document contained the same words as the query. This basic measure of relevance is what you might find in a library’s keyword search. Now, Google has advanced to evaluating how well passages of text in our content might answer a question, and they’re calling it “passage-based indexing.”
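To make the contrast concrete, here is a toy sketch of the older, word-matching idea of relevance, applied first to a whole document and then to each passage separately. It is an illustration only: the function names are hypothetical, and word overlap is a stand-in for scoring, not a description of how Google evaluates passages.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def keyword_overlap(query: str, text: str) -> float:
    """Fraction of distinct query words that also appear in the text."""
    query_terms = set(tokenize(query))
    if not query_terms:
        return 0.0
    return len(query_terms & set(tokenize(text))) / len(query_terms)


def best_passage(query: str, document: str) -> str:
    """Return the blank-line-separated passage with the highest overlap score."""
    passages = [p.strip() for p in re.split(r"\n\s*\n", document) if p.strip()]
    if not passages:
        return ""
    return max(passages, key=lambda passage: keyword_overlap(query, passage))


document = (
    "Eggs are a breakfast staple.\n\n"
    "To boil an egg, simmer it for about ten minutes."
)
print(best_passage("how long to boil an egg", document))
```

Scoring passages one at a time is what lets a single direct answer stand out, even when the rest of the page is about something broader.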

In addition to the way Google already indexes our content, Google will be storing our passages of text in their index. When pages are scored for ranking, these passages are going to be incorporated into the relevance scores. This might not seem like a big deal, but Google says they expect about 7% of all queries to be affected by the change. That’s quite a lot of queries.

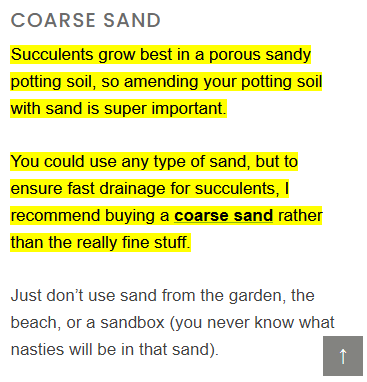

The technology behind how passage-based indexing will be used in ranking is probably based on BERT, and closely related to how Google identifies candidate passages for featured snippets. In other words, if our pages contain passages of text that are very “answery” for a given query, they are more likely to rank higher for that query.

For the search marketer, this means we have to clearly and directly answer the user’s question in our content. This is something we should have been doing the entire time. Google is now much better at detecting when we are.

As an added bonus to directly answering queries, the passages of text that help us rank with passage-based indexing might also be used for featured snippets. If this change gets us into the top 10 more often, we also get more chances at the featured snippet, the position with the highest click-through rate.

Best Practice: Clearly and directly answer the user’s query in your content. Think about what text you would use to optimize for featured snippets and incorporate that into your content briefs and content audits.

3. Render Content Quickly and Smoothly

Rendering performance is not just a ranking factor; it’s an everything factor. Every channel, and each of its conversion metrics, is affected by how well pages load for users. We have studies to back this up.

There are a lot of ways to measure page performance, and there are at least a dozen metrics for page speed. For a long time, organic search marketers didn’t know which ones mattered most.

Luckily, Google gave us some to optimize for in its Lighthouse reports. In 2020, the metrics were updated and are now called Core Web Vitals, and Google gave us the date when they will be incorporated into ranking: May 2021.

Google’s rollout of Core Web Vitals has been great for marketers and is hopefully an example of how updates will be launched in the future. Google has been extremely helpful and transparent with how the metrics will be implemented by telling us when the update will happen, offering several tools for measuring them in Google Search Console and Lighthouse reports, and making the data available for Core Web Vitals dashboards in Google Data Studio.

Best Practice: Do a site performance audit and get your Core Web Vitals worked out before May 2021. Websites with poor scores could get demoted, and those with high scores could get a badge in their search result snippets. We have a whole guide for optimizing site speed that will help you get started.
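For a performance audit, it helps to turn raw measurements into the same ratings Google's tools report. The sketch below is a minimal example that assumes the three Core Web Vitals metrics and the "good" and "poor" boundaries Google published at launch; the function names are hypothetical, and the thresholds should be checked against current documentation before relying on them.

```python
# Assumption: (upper bound for "good", upper bound for "needs improvement"),
# as published by Google when Core Web Vitals launched.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, in seconds
    "FID": (100, 300),   # First Input Delay, in milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless score
}


def rate_metric(name: str, value: float) -> str:
    """Classify one measurement as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[name]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def rate_page(measurements: dict[str, float]) -> dict[str, str]:
    """Rate every metric measured for a page."""
    return {name: rate_metric(name, value) for name, value in measurements.items()}
```

Running `rate_page` over measurements exported from a field-data report gives a quick list of which templates need attention first.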

4. Do Digital PR to Get Better Backlinks

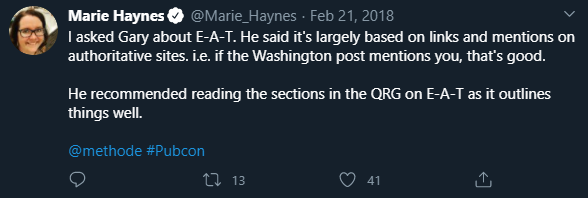

Articles about E-A-T often talk about on-page strategies we can use to rank higher, like having author bio boxes, cleaning up spammy user comments, and citing sources. These things are necessary to do, but I’ve seen websites struck down by recent core algorithm updates that were already doing most of those on-page best practices.

After auditing websites negatively impacted by core algorithm updates in 2019 and 2020, a pattern emerged that’s consistent with things Googlers have said about E-A-T and the patents they’ve put out. In almost every case I looked at, the biggest gap between the websites demoted by an update and their competitors that survived has been the quality of their links.

The survivors tended to have links from some of the most trustworthy places on the web: news websites and industry-relevant websites. This leads me to think that links are a big part of recent core algorithm updates and are an overlooked part of E-A-T.

This speculation isn’t so wild. Search quality updates are about finding better ways to separate the ham from the spam using signals that indicate quality. A link from a news organization is a particularly rare signal of trust because it implies that a human editor reviewed the link and decided that it’s safe to send their users to the linked site.

In 2015, Google received a patent for a version of PageRank that ranked pages based on their link distance away from seed websites. If Google uses a system in the same spirit as this patent, and its seed websites include news organizations, industry blogs, and magazines, that could explain why I’ve been seeing sites with these backlinks perform well after an update.
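The idea of “link distance from seed websites” is easy to picture with a small graph. The sketch below counts the fewest link hops from any seed site to every site it can reach, using a breadth-first search. It is a simplification under stated assumptions: the domains are made up, every link counts as one hop, and nothing here claims to reproduce the patented system or Google’s ranking.

```python
from collections import deque


def link_distances(links: dict[str, list[str]], seeds: set[str]) -> dict[str, int]:
    """Fewest link hops from any seed site to each reachable site."""
    distances = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        site = queue.popleft()
        for target in links.get(site, []):
            if target not in distances:  # first visit is the shortest path in BFS
                distances[target] = distances[site] + 1
                queue.append(target)
    return distances


# Hypothetical link graph: each key links out to the sites in its list.
links = {
    "news.example": ["brand-a.example"],
    "brand-a.example": ["blog.example"],
    "blog.example": ["brand-b.example"],
    "pbn.example": ["brand-c.example"],
}
print(link_distances(links, seeds={"news.example"}))
```

In this toy graph, the site linked directly from the news seed sits one hop away, while the site that is only linked from a private blog network never appears in the result at all.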

If Google is using a system of link-based trust to favor websites with rare and high-quality backlinks, the question becomes how to obtain them. Buying links from private blog networks, or whatever the spam du jour is, won’t get us there. What we need to do is reach out to publishers, editors, and journalists with a pitch for a story; public relations, in other words.

Best Practice: Add digital PR and content promotion to your off-page SEO strategy. Links are always going to be used for determining who is worthy of ranking at the top, and search engines are only going to get more selective over time.

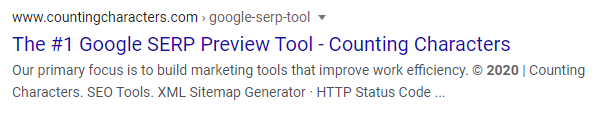

5. Take Control Over Snippet Descriptions

Google isn’t using our meta description tags in snippets very often. Our tags are ignored up to 71% of the time for rankings on the first page!

Google seems to think they are really good at picking excerpts of text to use instead of our hand-crafted meta descriptions. They may be for queries we weren’t targeting with our tags, but I have seen them pick some bizarre text to use instead.

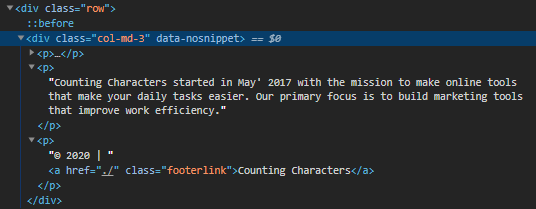

Luckily, Google gave us a tool to help us compensate for the hubris built into their algorithms. Using a special attribute called data-nosnippet, we can tell Google to ignore any text in the tagged element and its children for the purposes of search result snippets.

Let’s suppose Google thinks the text in our footer is relevant for a mostly different query and pulls the snippet from there.

We could help nudge Google to pick a different snippet by making our footer text ineligible for snippets. We just need to find the right tag to carry the attribute.
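Here is a hedged sketch of what that could look like, together with a small check we can run before shipping. The sample markup is made up for illustration, and the attribute sits on a `<div>` inside the footer because Google documents support for data-nosnippet on `span`, `div`, and `section` elements. The Python helper is hypothetical: it assumes well-formed HTML without scripts or styles, and it lists the text that remains eligible for snippets so we can confirm we did not block the main content by mistake.

```python
from html.parser import HTMLParser

# Void elements never get a closing tag, so they must not change nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}

# Hypothetical page: only the footer text is marked as ineligible for snippets.
PAGE = """
<main>
  <h1>SEO Best Practices</h1>
  <p>Clearly and directly answer the user's query.</p>
</main>
<footer>
  <div data-nosnippet>
    <p>Example Agency offers <span>paid media</span> services.</p>
  </div>
</footer>
"""


class SnippetTextExtractor(HTMLParser):
    """Collect text that sits outside any element marked data-nosnippet."""

    def __init__(self):
        super().__init__()
        self.blocked_depth = 0  # greater than 0 while inside a blocked subtree
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.blocked_depth or any(name == "data-nosnippet" for name, _ in attrs):
            self.blocked_depth += 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.blocked_depth:
            self.blocked_depth -= 1

    def handle_data(self, data):
        if not self.blocked_depth and data.strip():
            self.chunks.append(data.strip())


def snippet_eligible_text(html: str) -> list[str]:
    """Return the text chunks that are not inside a data-nosnippet element."""
    parser = SnippetTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.chunks


print(snippet_eligible_text(PAGE))
```

If the list comes back empty, or is missing the main content, the attribute landed on a tag that wraps too much of the page.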

Best Practice: Use data-nosnippet carefully and verify its implementation. We can block a lot of irrelevant text from appearing in snippets by picking a tag that contains a lot of text, but we could also make a mistake and block the whole main content of the page.

If there isn’t a good tag to use for blocking irrelevant snippet text already on the page, wrap the target text in an unstyled <span> tag and place data-nosnippet on it.

The Core of SEO Is Still the Same

No matter how many new search features and tools Google launches, the goal of web search remains the same: provide expertly produced content to answer the user’s query. This year, we’re getting some changes that make that easier to do with better site performance analytics and natural language processing. It’s also getting harder because mobile isn’t the easiest platform to work with, and the goalposts for links and site quality are moving a little further out.
