If you work in digital marketing, you are likely familiar with A/B testing: running an experiment to determine the best-performing version of a variable by splitting users into separate test groups and comparing performance between them.

A/B Testing on Social Media

Social media offers unique opportunities for A/B testing. Users are tied to logged-in accounts rather than cookies, which means audience demographics are more accurate and experiments are more likely to replicate. Additionally, most social media platforms let advertisers easily target and exclude users from segments, reducing the chance of overlap between test groups.
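When you build test groups yourself from a customer list (for example, to upload as two separate audiences and exclude each from the other), one way to guarantee the groups never overlap is to assign each user by hashing their ID. The Python sketch below assumes plain string IDs; the function names and the `test_name` salt are hypothetical and not part of any Facebook tool.

```python
import hashlib
from typing import Dict, Iterable, List

GROUPS = ("A", "B")

def assign_group(user_id: str, test_name: str) -> str:
    # Salting with the test name gives each test its own independent split.
    digest = hashlib.sha256(f"{test_name}:{user_id}".encode("utf-8")).hexdigest()
    # The same user always lands in the same group for a given test.
    return GROUPS[int(digest, 16) % len(GROUPS)]

def split_customer_list(user_ids: Iterable[str], test_name: str) -> Dict[str, List[str]]:
    buckets: Dict[str, List[str]] = {g: [] for g in GROUPS}
    for uid in dict.fromkeys(user_ids):  # drop duplicates, keep first-seen order
        buckets[assign_group(uid, test_name)].append(uid)
    return buckets

# Hypothetical customer IDs, for illustration only.
groups = split_customer_list([f"customer-{i}" for i in range(1000)], "spring_creative_test")
print(len(groups["A"]), len(groups["B"]))
```

Because each ID goes into exactly one bucket, the two lists are disjoint by construction, and re-running the split later reproduces the same groups.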

As with any channel where a business is investing ad dollars, A/B testing is an important piece of campaign optimization. Analyzing the performance of audiences, ad creative, and landing page conversion contributes to a healthy social media strategy. A/B testing can also help advertisers measure success across channels, which allows for better budget allocation between platforms.

The concepts outlined here apply to A/B testing on any social media platform, but the emphasis of this post is on one platform specifically: Facebook.

Best Practices for Facebook A/B Testing

The A/B testing strategy for each business is unique, as each company has different goals, resources, and products. That being said, there are several factors we consider to be best practices across the board.

Goal Setting

Goal setting in a social media A/B test may look different from goal setting in other channels, but it is just as important. Once a social media campaign is live, user feedback is immediate, and it’s easy to get caught up in the sentiment of user responses without stepping back to see the big picture. While sentiment is an important part of evaluating success, setting goals beforehand (tied to specific KPIs) ensures your team can accurately evaluate results.

Isolating Variables

As with any science experiment, it is important to manipulate only the variable you want to test and hold all other variables constant. This ensures your results reflect that variable rather than outside factors, and it is just as important for A/B testing on social media. The variable can be any aspect of the campaign, from location to audience to creative asset. We always recommend that our clients avoid combining A/B tests, so that one test doesn’t skew the results of another.
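Before launching a test, it can help to check mechanically that the control and the variant differ only in the variable under test. Below is a minimal Python sketch, assuming each ad setup is described as a dictionary; the field names and values are hypothetical, not Facebook's API.

```python
from typing import Any, Dict, Set

def changed_fields(control: Dict[str, Any], variant: Dict[str, Any]) -> Set[str]:
    # Any key present in either setup whose value differs (a missing key counts as None).
    keys = control.keys() | variant.keys()
    return {k for k in keys if control.get(k) != variant.get(k)}

def check_single_variable(control: Dict[str, Any], variant: Dict[str, Any], tested_field: str) -> None:
    # Raise if anything other than the tested field was changed.
    diff = changed_fields(control, variant)
    if diff != {tested_field}:
        raise ValueError(f"Expected only {tested_field!r} to differ, found: {sorted(diff)}")

# Hypothetical ad setups: only the creative changes.
control = {"location": "US", "audience": "lookalike_1pct", "placement": "automatic", "creative": "video_v1"}
variant = dict(control, creative="carousel_v1")
check_single_variable(control, variant, "creative")  # passes silently
```

If someone also swaps the audience on the variant, the check raises instead of letting two variables change at once.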

A/B Testing vs. Facebook Split Testing

While A/B testing is possible on Facebook, LinkedIn, and Twitter, it should not be confused with Split Testing, Facebook’s dedicated A/B testing feature. The difference lies in how the campaign is structured and how results are measured.

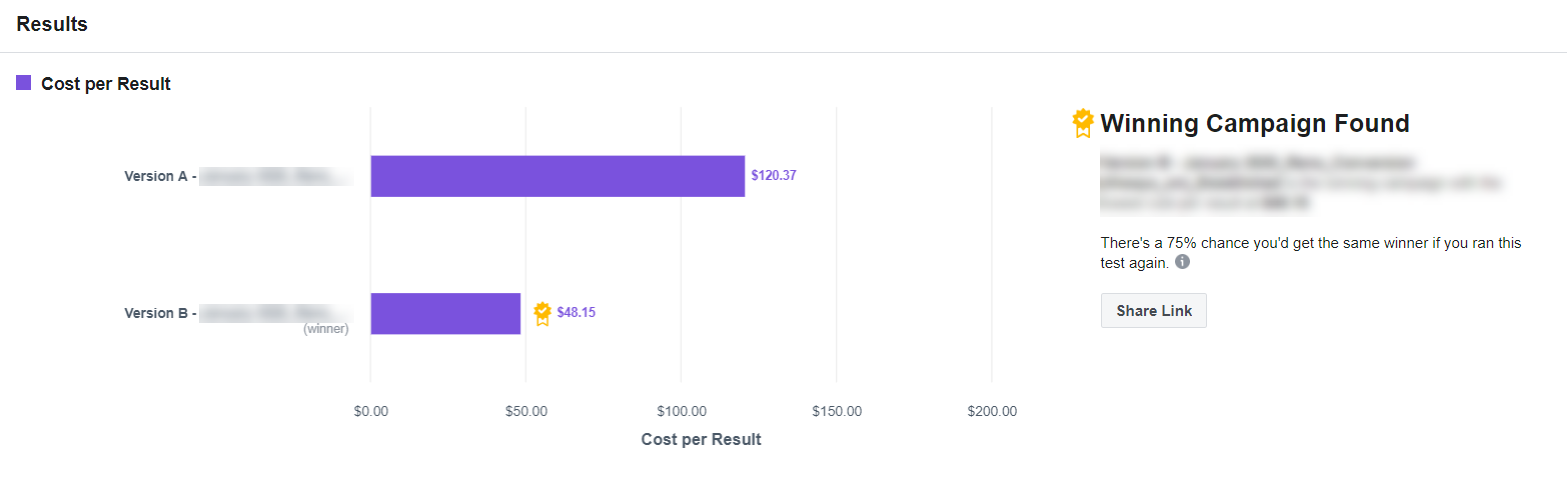

For example, in a traditional A/B test, a standard campaign is created with one audience and two creative assets. Based on predetermined KPIs, the advertiser compares performance and declares the winning creative. In a Split Test, by contrast, Facebook “divides budget to equally and randomly split exposure between each version of your creative.” Facebook then declares a winner based on cost per result.

Facebook also reports a confidence percentage: the likelihood that you would get the same result if the test were repeated.
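Facebook does not publish exactly how it computes a Split Test's confidence, so the Python sketch below swaps in a standard technique as a rough stand-in: with equal spend per variant, compare cost per result, then run a one-sided two-proportion z-test on each variant's result rate (results per impression). The function names and the counts are hypothetical.

```python
import math

def cost_per_result(spend: float, results: int) -> float:
    # Lower is better; a variant with no results has infinite cost per result.
    return spend / results if results else math.inf

def confidence_b_beats_a(results_a: int, impressions_a: int, results_b: int, impressions_b: int) -> float:
    # One-sided two-proportion z-test on result rate; returns 1 - p-value.
    p_a = results_a / impressions_a
    p_b = results_b / impressions_b
    pooled = (results_a + results_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        return 0.5  # no variation observed, so no evidence either way
    z = (p_b - p_a) / se
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))  # standard normal CDF at z

# Hypothetical results with an equal $500 budget per variant.
spend = 500.0
print(cost_per_result(spend, 100), cost_per_result(spend, 130))
print(round(confidence_b_beats_a(100, 20_000, 130, 20_000), 3))
```

Here variant B has the lower cost per result, and the test puts roughly 97–98% confidence on B's higher result rate not being chance. Treat this as an approximation, not a reproduction of Facebook's calculation.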

Split Testing is a great way to isolate variables and reduce effort on the part of the advertiser, as Facebook determines the winning variation. But Split Testing is not right for all businesses, especially those that require significant flexibility. Once a test has been published, it cannot be edited until the test is finished (it can only be turned off).

Measuring Results

The Breakdown Effect

If your business is executing an A/B test on Facebook but not running a structured Split Test, it is important to take into account the Breakdown Effect. Facebook states:

“A common point of confusion when evaluating Facebook ads reporting between ad sets, placements, and ads is that our system appears to shift impressions into underperforming ad sets, placements, or ads. In reality, the system is designed to maximize the number of results for your campaign dependent on what ad set optimization you choose. This understandable misinterpretation is called ‘The Breakdown Effect.’”

In other words, Ads Manager can make it look as though the algorithm optimized your campaign incorrectly.

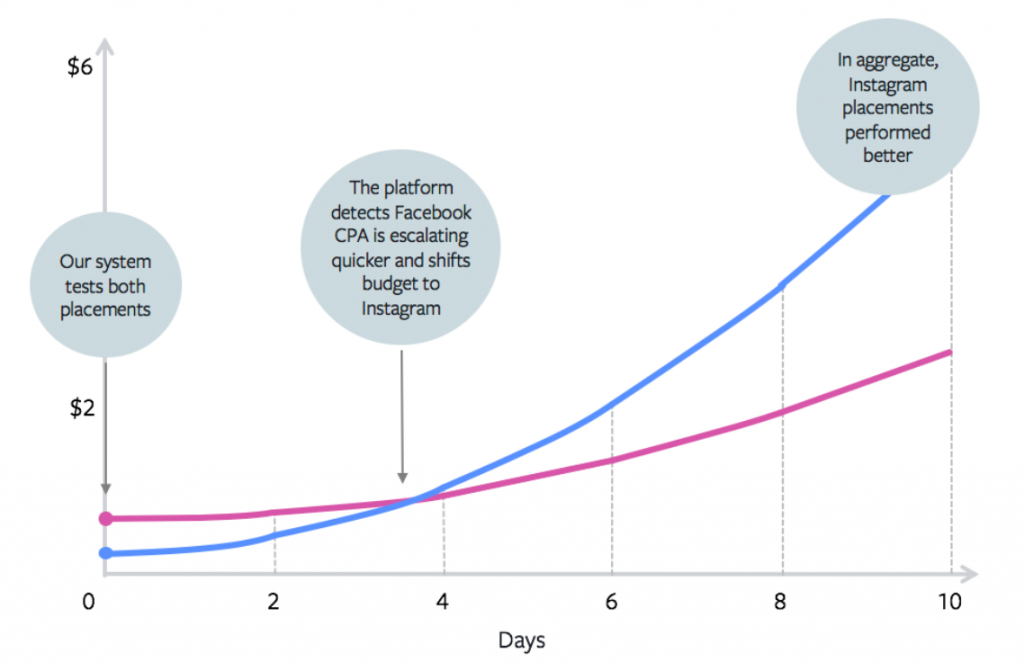

For example, say you are running a campaign with all placements and view performance by placement. It may look like the Instagram Feed drove a lower CPA than other placements, even though Facebook allocated less budget to it. Your first instinct might be to manually shift a larger share of the budget to Instagram, but this would likely decrease overall performance. As Facebook puts it, “The system recognized that although one placement was driving the most efficient results initially, it predicted the cost was going to increase throughout the duration of the campaign.”

The key here is to look at aggregate performance by strategy, not a breakdown of each individual element.
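One simplified way to see why chasing the lowest average CPA can backfire is that average CPA hides marginal cost: a placement may deliver its first few results cheaply and then get much more expensive. The Python sketch below uses made-up cost tiers per placement, an assumption for illustration and not how Facebook's delivery system actually models cost, and compares the campaign's aggregate CPA under two budget splits.

```python
from typing import Dict, List, Optional, Tuple

# Each tier is (max results available at this price, cost per result); None = unlimited.
Tiers = List[Tuple[Optional[int], float]]

def results_for_spend(spend: float, tiers: Tiers) -> float:
    # Spend buys the cheapest tier first, then moves on to the next, pricier tier.
    results, remaining = 0.0, spend
    for max_results, cost in tiers:
        if remaining <= 0:
            break
        tier_budget = remaining if max_results is None else min(remaining, max_results * cost)
        results += tier_budget / cost
        remaining -= tier_budget
    return results

def campaign_summary(allocation: Dict[str, float], placements: Dict[str, Tiers]) -> Tuple[float, float]:
    # Aggregate view: total results and blended CPA across all placements.
    total_spend = sum(allocation.values())
    total_results = sum(results_for_spend(s, placements[name]) for name, s in allocation.items())
    return total_results, total_spend / total_results

# Made-up cost curves: Instagram Feed is cheap for 10 results, then gets expensive.
placements = {
    "Instagram Feed": [(10, 5.0), (None, 30.0)],
    "Facebook Feed": [(None, 12.0)],
}
for allocation in ({"Instagram Feed": 50, "Facebook Feed": 450},
                   {"Instagram Feed": 250, "Facebook Feed": 250}):
    for name, spend in allocation.items():
        print(f"{name}: average CPA ${spend / results_for_spend(spend, placements[name]):.2f}")
    results, cpa = campaign_summary(allocation, placements)
    print(f"Campaign: {results:.1f} results, CPA ${cpa:.2f}\n")
```

With these made-up tiers, the first split shows the Instagram Feed at a $5.00 average CPA versus $12.00 for the Facebook Feed, yet forcing a 50/50 split drops the campaign from 47.5 to 37.5 results and raises its blended CPA from $10.53 to $13.33.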

What Does Success Look Like?

As I mentioned, a successful A/B test on social media looks similar to a scientific experiment elsewhere: it can be replicated, it provides actionable insights, and results can be directly tied to specific variables in the campaign. A successful A/B testing strategy should include next steps and iterations. Because ad creative on social media has a short lifespan, there will always be another test to perform. It is important to isolate key themes within your tests to move the program forward.

Primary and Secondary KPIs

Here are a few examples of what we consider to be primary and secondary KPIs. Your primary KPIs should be measurable period over period and tie directly to your business objectives. They should not be subjective.

Primary:

- Sales (last-click attribution)

- Leads

- Landing page views

- Click-through rate

Secondary:

- User sentiment

- Referral traffic

- Sales (impression attribution)
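Because primary KPIs should be measurable period over period, it helps to compute them the same way every time. Below is a minimal Python sketch, assuming you export raw counts for each reporting period (the field names are hypothetical). It reports the relative change for each primary KPI listed above. User sentiment is left out because it is not a count.

```python
from dataclasses import dataclass
from typing import Dict, Optional

@dataclass
class PeriodStats:
    # Raw counts exported for one reporting period (hypothetical field names).
    impressions: int
    clicks: int
    landing_page_views: int
    leads: int
    last_click_sales: int

def primary_kpis(s: PeriodStats) -> Dict[str, float]:
    return {
        "click_through_rate": s.clicks / s.impressions if s.impressions else 0.0,
        "landing_page_views": float(s.landing_page_views),
        "leads": float(s.leads),
        "last_click_sales": float(s.last_click_sales),
    }

def period_over_period(prev: PeriodStats, curr: PeriodStats) -> Dict[str, Optional[float]]:
    # Relative change per KPI; None when the previous value is zero.
    before, after = primary_kpis(prev), primary_kpis(curr)
    return {k: (after[k] - before[k]) / before[k] if before[k] else None for k in before}

change = period_over_period(
    PeriodStats(impressions=10_000, clicks=150, landing_page_views=120, leads=10, last_click_sales=4),
    PeriodStats(impressions=10_000, clicks=200, landing_page_views=150, leads=12, last_click_sales=5),
)
print({k: None if v is None else f"{v:+.0%}" for k, v in change.items()})
```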

A/B Test Suggestions for Your Business

Our team has done extensive A/B testing for clients in a variety of verticals. Based on that experience, here are some market-specific A/B tests to help you get started.

eCommerce/Business to Consumer

eCommerce and retail companies have by far the most testing options, along with the advantage of tying results directly to sales. With this ability comes the temptation to A/B test every aspect of the current social program. We recommend setting a specific testing schedule, with enough flexibility built in to pivot if necessary. Sticking to a schedule ensures that tests build on each other strategically, and it reduces the likelihood that outside groups drive the program off course.

Business to Business

Because B2B companies typically have a longer sales cycle and a smaller pool of potential clients, we recommend starting with audience A/B tests. If lookalike audiences don’t have enough seed data and retargeting audiences are too small, A/B testing can help your business determine what your audience looks like on social media. From there, we recommend moving on to messaging and creative testing via lead generation.

Professional Services

While it may be difficult to measure which type of creative drives the most sales, it is easy to determine which type of messaging your customers respond well to. For example, for one of our clients that sells a high-investment product, we segmented their customer lists into groups by demographic. We then A/B tested messaging highlighting specific value propositions. The differences in responses amongst the groups informed our messaging strategy across all channels moving forward.

In Other Words

A/B testing is a valuable piece of your paid social strategy. It should inform not only your social investment but your investment in other channels as well. The ability to test specific messaging with a specific audience group and receive immediate anecdotal feedback cannot be replicated elsewhere. By following the recommendations above, any business should be able to run a social media A/B test that yields clear, actionable insights.