Updated on April 20th, 2022, to include current data and insights.

It’s common knowledge that site speed impacts the user experience on your website. But how, exactly, and by how much? Does a slower website mean lost dollars?

Absolutely. Multiple studies, including Portent internal research, have concluded that site speed impacts conversion rate and sales.

We did some analysis based on e-commerce and conversion data from 20 websites and over 27,000 landing pages worth of data.

Research Method

We looked at just over 100 million page views across 20 B2B and B2C sites for conversion data. The critical statistics:

- We took a 30-day snapshot.

- Our page load speed sample size across all websites was gathered from 5.6M sessions over those 30 days.

- Six of the sites were B2C e-commerce. The other 14 were B2B lead generation.

- The sites ranged from major national travel brands to small, niche software-as-a-service companies.

What We Found for B2B Websites

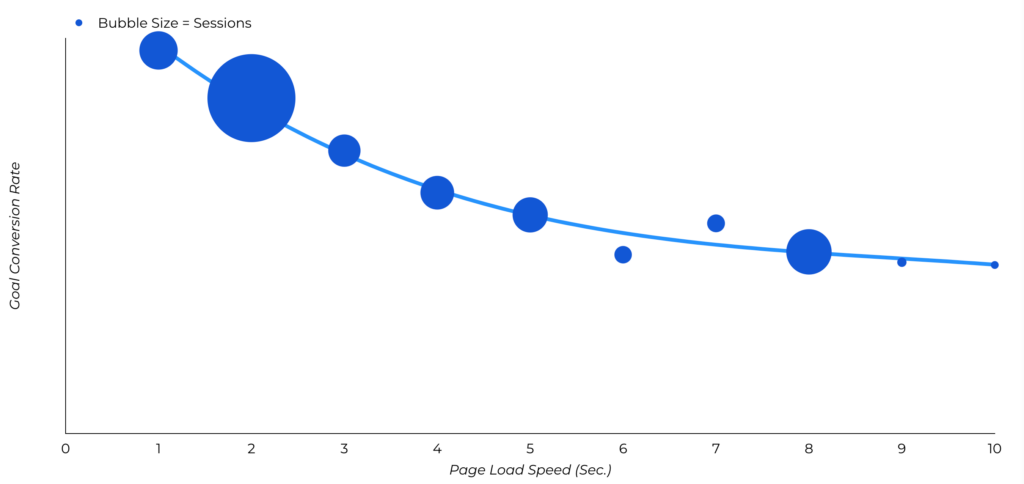

These are our findings when comparing site speed versus conversion rates for lead generation websites:

- Generally, site speed improvement has been flat. 82% of the pages we measured loaded in 5 seconds or less. This was the same as when we ran the study in 2019, before the pandemic.

- The difference in conversion rate between blazing fast sites and modestly quick sites is astonishing. A site that loads in 1 second has a conversion rate 3x higher than a site that loads in 5 seconds.

- The difference in conversion rate between blazing fast sites and slow sites is even more pronounced. A site that loads in 1 second has a conversion rate 5x higher than a site that loads in 10 seconds.

What We Found for B2C E-Commerce Websites

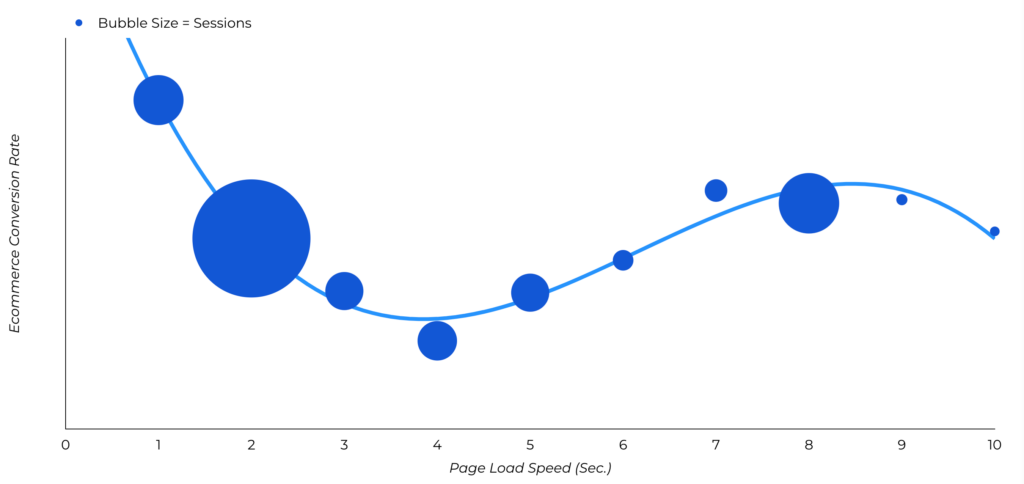

These are our findings when comparing site speed versus e-commerce conversion rates for B2C websites:

- Site speed has improved slightly. 86% of the pages we measured loaded in 5 seconds or less. That was only true 81% of the time when we ran the study in 2019, before the pandemic.

- The difference in e-commerce conversion rate between blazing fast sites and modestly quick sites is sizable. A site that loads in 1 second has an e-commerce conversion rate 2.5x higher than a site that loads in 5 seconds.

- The difference in e-commerce conversion rate between blazing fast sites and slow sites is, oddly, not as high. A site that loads in 1 second has an e-commerce conversion rate 1.5x higher than a site that loads in 10 seconds. (This could be due to the smaller sample size of pages we had to work with in the 10-second range this time around.)

To Improve Goal Conversions on Your Website: Aim for a 1-4 Second Load Time

Goal conversions on websites are generally achieved at a higher rate than e-commerce conversions. A simple exchange of contact information has a much lower barrier to complete than e-commerce transactions.

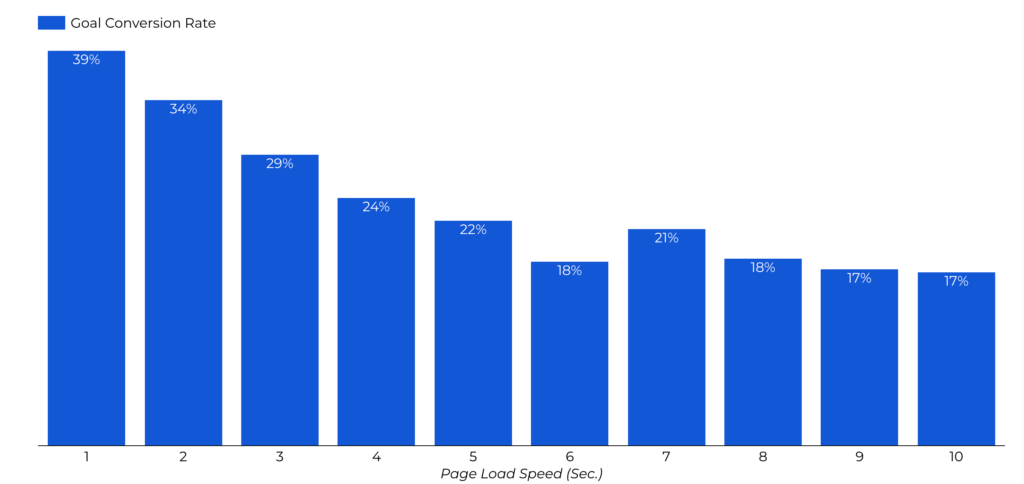

However, while overall goal conversion rates are higher than transaction rates, the dropoff of conversions is much steeper as sites get slower. When pages load in 1 second, the average conversion rate is almost 40%. At a 2-second load time, the conversion rate already drops to 34%. At 3 seconds, the conversion rate begins to level off at 29% and reaches its lowest at a 6-second load time.

At any page load time above 5 seconds, you’re talking about roughly half the conversion rate of a fast website.

To Improve Transaction Conversions: Aim for a 1-2 Second Load Time

1 to 2 seconds sounds astonishingly fast. But website users are accustomed to fast load times across the web, and slower sites pay the penalty when visitors abandon their carts in favor of a faster website to make a transaction.

The highest e-commerce conversion rates occur at load times between 1 and 2 seconds. From there the average rate falls steadily, from 3.05% at 1 second down to 0.67% at a 4-second load time. After that, conversion rates are all below 2%.

In fact, your e-commerce conversion rate decreases by an average of 0.3% for every additional second it takes for your website to load.

Quick math to further demonstrate this point:

If 1,000 people visit your website to buy a $50 product, this could illustrate the difference in your potential earnings:

- A 1 second page load time at a 3.05% conversion rate results in $1,525

- A 2 second page load time at a 1.68% conversion rate results in $840

- A 3 second page load time at a 1.12% conversion rate results in $560

- A 4 second page load time at a 0.67% conversion rate results in $335

Going from a 1-second to a 4-second load time, potential sales have dropped by $1,190.
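The quick math above can be reproduced in a few lines of Python. This is only a sketch: the conversion rates are the ones quoted in this post (3.05%, 1.68%, 1.12%, and 0.67%), while the function name and the 1,000-visitor, $50-product scenario are illustrative assumptions you can swap for your own figures.

```python
# Average e-commerce conversion rate by page load time in seconds,
# as quoted in the article text.
CONVERSION_RATE_BY_LOAD_SECONDS = {1: 0.0305, 2: 0.0168, 3: 0.0112, 4: 0.0067}


def projected_revenue(visitors, price, conversion_rate):
    """Revenue if `conversion_rate` of `visitors` each buy one item at `price`."""
    return visitors * conversion_rate * price


# Hypothetical scenario from the text: 1,000 visitors and a $50 product.
for seconds, rate in CONVERSION_RATE_BY_LOAD_SECONDS.items():
    revenue = projected_revenue(1000, 50.0, rate)
    print(f"{seconds} second load time at {rate:.2%}: ${revenue:,.0f}")

fastest = projected_revenue(1000, 50.0, CONVERSION_RATE_BY_LOAD_SECONDS[1])
slowest = projected_revenue(1000, 50.0, CONVERSION_RATE_BY_LOAD_SECONDS[4])
print(f"Gap between 1 and 4 seconds: ${fastest - slowest:,.0f}")
```

Plugging in your own traffic and average order value shows how quickly the gap scales.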

Multiply that across more visitors to the website looking at potentially higher-priced products and services, and that results in significant gaps in potential revenue.

Some Pages Matter More Than Others

Hardly news, but faster checkout, login, and home pages matter most. After that, load speed for product category pages has the most impact on sales. All of these pages have high consumer-intent traffic. Make them fast.

How Can I Improve My Site Speed? Factors to Consider

Page ‘weight’ (that’s the total kilobytes transferred, images and all) is no longer the biggest factor in site load time.

The reason? Many sites have already streamlined their code by “minifying” it and serving it with GZIP compression. So the bigger factor, in many cases, is the server and page configuration.

If you want to speed up your site, look at the following elements.

JavaScript Timing

Where possible, put JavaScript src ‘.js’ includes at the very end of your page, defer them, or load them asynchronously. For example, if you use Google Analytics or jQuery, you’re including these scripts in your page from external files. These are ‘blocking’ calls, and your page may not appear to the user until these scripts fully load. If something goes wrong or the script just takes a few seconds, the perceived delay can kill conversion rates.

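Use deferred execution (the defer attribute) if the JavaScript can wait until the browser has finished parsing the page. Deferred scripts still run in the order they appear.

Use asynchronous execution (the async attribute) if you don’t care when the script fires, or in what order.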

Resident Portent SEO expert Evan Hall also recommends configuring non-essential Google Tag Manager tags to fire on “DOM ready” or “Window Loaded” to improve page speed. If a GTM tag isn’t critical to rendering a page, see if you can fire it a little later.
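One way to act on the script-loading advice above is to audit a page for blocking includes. The sketch below uses Python's standard html.parser module to list external scripts in the head that have neither async nor defer. The class and function names are hypothetical, and flagging only scripts in the head is a simplifying assumption, not a complete audit.

```python
from html.parser import HTMLParser
from typing import List


class BlockingScriptFinder(HTMLParser):
    """Collects external <script src=...> tags in <head> that lack async/defer."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking_sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head:
            # Boolean attributes such as async arrive with a value of None.
            attributes = dict(attrs)
            src = attributes.get("src")
            # Module scripts are deferred by default, so they are not flagged.
            is_module = attributes.get("type") == "module"
            has_hint = "async" in attributes or "defer" in attributes
            if src and not has_hint and not is_module:
                self.blocking_sources.append(src)

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_blocking_scripts(html: str) -> List[str]:
    """Return the src of every render-blocking external script in the head."""
    finder = BlockingScriptFinder()
    finder.feed(html)
    return finder.blocking_sources


sample_page = (
    "<html><head>"
    '<script src="a.js"></script>'
    '<script src="b.js" defer></script>'
    '<script async src="c.js"></script>'
    "</head><body><p>Hello</p>"
    '<script src="d.js"></script>'
    "</body></html>"
)
print(find_blocking_scripts(sample_page))
```

Scripts placed at the end of the body, like d.js in the sample, are left alone because they follow the "very end of your page" advice.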

ETags and Expires Headers

Other page speed factors are ETags and expires headers. These may sound scary but expires headers, in particular, are easy to set. Your IT team or web host will know what to do.

These two settings help reduce the number of requests a browser makes to the server. They tell visiting web browsers which files to update. If set correctly, you can prevent those browsers from reloading files that rarely change, such as your logo. This is useful for websites with recurring visitors, who do not need to load the page from scratch every time.

Browsers will cache files with far-future expires headers and keep them cached, so the apparent load time is far shorter. And they’ll use ETags to more easily check if a file has changed.
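To make the two headers concrete, here is a minimal Python sketch of what a server might send for a rarely changing file and how it could answer a revalidation request. The function names and the one-year lifetime are assumptions for illustration. The Cache-Control max-age header is not mentioned above; it is included because it is the modern counterpart to Expires. Real servers and frameworks handle more cases, such as weak ETags.

```python
import hashlib
import time
from email.utils import formatdate

ONE_YEAR_SECONDS = 365 * 24 * 60 * 60  # assumed "far-future" lifetime


def caching_headers(body, max_age=ONE_YEAR_SECONDS, now=None):
    """Build ETag, Expires, and Cache-Control headers for a static file."""
    now = time.time() if now is None else now
    # A content hash works as an ETag: it changes only when the file changes.
    etag = '"' + hashlib.sha256(body).hexdigest()[:16] + '"'
    return {
        "ETag": etag,
        "Expires": formatdate(now + max_age, usegmt=True),
        "Cache-Control": f"public, max-age={max_age}",
    }


def is_not_modified(request_headers, current_etag):
    """True when the browser's cached copy still matches (reply 304, no body)."""
    header = request_headers.get("If-None-Match", "")
    candidates = [tag.strip() for tag in header.split(",")]
    return current_etag in candidates


logo_headers = caching_headers(b"fake logo bytes")
revalidation = {"If-None-Match": logo_headers["ETag"]}
print(logo_headers)
print("Send 304 Not Modified:", is_not_modified(revalidation, logo_headers["ETag"]))
```

While the file is within its expiry window the browser does not ask at all. Once it does ask, a matching ETag lets the server skip resending the file.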

Image Size

Page weight may not matter as much. But image size is still a major drag on load times. Please, compress images.

Run Google PageSpeed Insights on your site. There is a section in this report that provides feedback regarding proper sizing, lazy loading, encoding images, and recommended image format.
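Alongside a PageSpeed Insights run, a rough local check can point at the heaviest image files first. The sketch below walks a folder and lists images above a size threshold. The function name, the extension list, and the 200 KB default are arbitrary assumptions, not recommendations from the study.

```python
import os

IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".gif", ".webp"}


def find_heavy_images(root, max_bytes=200_000):
    """Return (path, size) for images under `root` larger than `max_bytes`,
    largest first."""
    heavy = []
    for folder, _subfolders, filenames in os.walk(root):
        for name in filenames:
            extension = os.path.splitext(name)[1].lower()
            if extension in IMAGE_EXTENSIONS:
                path = os.path.join(folder, name)
                size = os.path.getsize(path)
                if size > max_bytes:
                    heavy.append((path, size))
    return sorted(heavy, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical usage: scan the current directory.
    for path, size in find_heavy_images("."):
        print(f"{size / 1024:,.0f} KB  {path}")
```

The files it reports are the first candidates for compression, resizing, or a more efficient format.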

Then What?

After that, things get a little more complicated. But not much. Any competent web developer or IT professional can get you set up with GZIP compression, for example, or troubleshoot slow database load times.
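To get a feel for what GZIP compression buys you, this short Python sketch compresses a block of repetitive markup with the standard gzip module and reports the savings. The sample HTML and the function name are made up for illustration. On a real site the compression is switched on in the web server configuration, not in application code like this.

```python
import gzip


def gzip_savings(payload):
    """Return (original size, compressed size, fraction saved) for `payload`."""
    if not payload:
        return 0, 0, 0.0
    compressed = gzip.compress(payload)
    return len(payload), len(compressed), 1 - len(compressed) / len(payload)


# Hypothetical product listing markup; repetitive text compresses very well.
sample_html = ('<li class="product"><a href="/item">Item</a></li>\n' * 500).encode("utf-8")

original_size, compressed_size, saved = gzip_savings(sample_html)
print(f"{original_size} bytes -> {compressed_size} bytes ({saved:.0%} smaller)")
```

Text assets such as HTML, CSS, and JavaScript benefit most. Already-compressed formats like JPEG gain little.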

Bonus: check out Portent’s Development Architect Andy Schaff’s two-tiered ranking system of page speed optimizations to help you prioritize changes to make to your site.

Easy Wins, Big Advantage

If you’re ready to tackle site speed, we’ve got you covered. Learn the ins and outs of a faster site in our Ultimate Guide to Site Speed.

Other web speed tools:

PageSpeed Insights

BigQuery

Chrome User Experience Report

Measure

Compared to other digital marketing challenges, page speed is easy to address. And it has measurable results. But very few companies do the simple things that make a site fast. The good news is that you can gain a big competitive advantage if you just do the basics.

Ian,

Amen! I changed hosts, spent a bit more each month and had my developer speed up my site. Night and day difference, both in the experience of visiting my blog and in the traffic spike I’ve experienced since. People dig quick sites and rarely wait around for slow sites. Most of us are this way but are blind when we see our own blogs. I was for years 😉

RB

Hello Ian,

First, a technical tip: I see “Your comment is awaiting moderation.” for Ryan’s comment above which is, to say the least, weird.

Now – to the study.

I am confused: you say you analyzed data from 16 e-commerce sites but then examined 500 sites with Yslow. Then you present page value data. I don’t quite get how you measured page value for those 500 sites based on the 16 sites. Or maybe I’m not getting the setup at all?

Another question – what is the statistical significance and the power for the difference between the <=1 second bucket and the 1-2 second bucket? You have not published any sample sizes so I can't calculate those.

Also – have you checked for confounding variables between the buckets? Like maybe the niche of the site plays a significant role or some other unaccounted but important segmentation line?

Thanks,

Georgi

Hi Georgi-

The 500-site study was a separate review. The 16-site study included conversion data and is what we used to show e-commerce impact. The 500-site study was performed to show the most common problems. I’m going to add that to the post.

As far as niche and other potential confounding variables: There’s nothing obvious, but it is why I mention wanting to do this study again with many more sites. That requires access to data from many companies, though, and it’s unlikely most will cooperate.

Thanks Ian! I don’t blame you for pushing site speed so much. We do the same thing. If nothing else, it is the one thing that we work on during our time with a client. Keep up all the solid work my friend!

Hi Ian,

great post.

I am 100% with you on this and, in my peers’ opinion, spend way too much time focusing on hosting reliability and speed. I am the only person I know who really, truly loves DNS.

I also think that people in search should care a lot more about their clients’ web speed. In my opinion, the best way to monitor that is RUM (real user measurement), which you can actually use on very low-traffic sites for free via Pingdom.

That way you can fix issues that you would miss by simply using a tool to collect the speed from one data center (obviously there is huge value in those tools as well in the beginning). But I do not understand why everyone in search does not bring their customers over to a specialized hosting environment for their site, along with using RUM data to make sure that the client’s customers are really happy, no matter where they are.

We do not allow clients to stay on Go Daddy and pay for the correct listing for their site ourselves because it is so important. We also think it is important for SEO’s to have the ability to work with the hosting provider as well as the client maintaining ownership of their account if they wish to & always give the client a daily backup of their website regardless.

It has gotten to the point where we now have a complete set up that is redundant and really fast after using literally over 60 hosting providers. I would be happy to share all my testing data with you if you like it?

I have narrowed it down to a handful of quality hosts that dependent on my clients needs I will pay for myself if the client has an issue with the bill.

I have also found that the EdgeCast ADN (not a typo: application delivery network) is an incredible tool.

By adding Google PageSpeed to every site via the ADN, then using DynECT DNS mixed with EdgeCast’s new Route DNS, we can actually run Nginx + Varnish with a site that will benefit from Google PageSpeed. As I am sure you know, most have to choose between just using Nginx or Apache in order to use Google PageSpeed.

I am really happy to see companies like DigitalOcean sprouting up because they give people who do not have the budget the ability to run a much faster site than they normally could, for five dollars. However, while I endorse DigitalOcean for many uses, I rarely, and I mean very rarely, use them for my customers.

I think we have got to think about the hosting and coding and how they affect the end user. That is the name of the game.

End users hate downtime and love sites that are really fast on mobile and desktop.

Simply by moving clients’ websites off hosting companies like Go Daddy and onto our Firehost setup with Google PageSpeed and Varnish, then of course adding the correct DNS (DynECT or EdgeCast DNS, including geo-IP; DNS Made Easy is cheap and fast as well), we have brought many slow websites from load times of 10-plus seconds down to an average RUM speed of under 2-3 seconds, prior to making any code changes, which is something that is just as important.

I agree with you it is in no way cheap to do this, but it is worth it every time.

I am really looking forward to more and more hosting companies becoming more aware and networks getting faster.

Sorry for the very long message,

Thomas

No problem re: the long message. What a great comment!

I agree: I think clients are also getting more savvy about this and seeking out faster providers. With virtualization, having your own dedicated box is easier.

Ian

I would love to read an article on how these issues may or may not conflict with having a more templated web site service, such as a wordpress site.

I’m curious what challenges one might have trying to speed up a wordpress site, and the pros and cons to having a wordpress site when it comes to page speed.

Hi Norm,

WordPress sites are just like any other. They can be fast or slow. I do find WordPress a bit easier to optimize, simply because there are plugins that’ll do it for me, like W3TotalCache. Our site runs on WordPress.

Hope this helps,

Ian

Hi Ian,

I love the post. I too feel like I beat the same drum every day. I specialize in making Magento eCommerce sites faster by providing my customers with a very optimized hosting platform. I’ve written several case studies on site speed and I actively seek data to increase site speed and performance on a daily basis. I guess you could call me a speed geek. I don’t understand why some site owners / developers do feel the same way about their site speed and how it affects their bottom line, yet they are hesitant about taking action to address their concern.

An interesting observation with the case studies I’ve performed:

1. Most developers / site owners realize that they can increase revenue by simply speeding up their page load times.

2. They run a series of speed tests through Google Insights or Webpagespeedtest and gather data.

3. They obtain detailed reports that show them how they can improve their page load times.

4. They focus their attention very heavily on coding / design while sometimes overlooking the performance of their server.

5. Those that understand their server plays a significant role in site speed start researching hosting platforms.

6. But they are hesitant to make a change with their hosting platform because they believe it is a monumental task, or they just don’t know who to trust.

I took all of these variables into consideration while searching for an easy solution to take away the pain, so I created a live Magento hosting comparison tool. I have given these concerned individuals the opportunity to compare their current Magento site to a live mirror copy of itself on an optimized platform for free, just to take the fear factor away and show them what can happen when they get a tailored hosting plan that complements their specific site.

What I don’t understand is that people are still hesitant to try the free test, but those who do, get rewarded with the benefit of having a faster site. Ultimately, that means they make more money.

Great points, Tom. I run into this all the time, with all changes: Hosting, database configuration, image compression, etc. But, that just means a bigger advantage for everyone else, I guess.

Great article Ian!

Harping on page processing speed is a common aspect of most of my audits as well.

In addition to the areas you covered, there’s 3rd party domain processing.

GoogleAdServices code embedded on a site to help track AdWords visit data is notorious – I’ve had clients deactivate that code alone to gain upwards of three seconds processing speed. Disqus is ridiculously flawed code. Social sharing live-count scripts, useless bell and whistle widgets, multiple ad-network conflicts…

Another common culprit is First Byte Time – issues with a site communicating with its own server or its data server…

So many ways to shave off precious seconds. And while I encourage clients to shoot for an ideal speed, I also let them know that (as you describe in the article), progress is often enough to make serious gains.

They typically need to balance limited resources in the implementation phase so I don’t demand that they achieve the ideal speeds (at least in the initial phase after an audit)…

All excellent points. Javascript drives me nuts, when you could just scoot it to the bottom of the code and reduce load time by quite a bit.

Site speed is something that I definitely feel is overlooked by a lot of sites.

One service that I feel isn’t mentioned enough for this is Cloudflare. Especially for smaller sites, it offers a free solution that, in addition to caching your resources on their servers for faster response times, will also auto-minify your CSS, ensure that your JavaScript files are loaded asynchronously and, I believe, a few other things.

I managed to get my company’s site from 4 seconds to ~1.3 seconds using mostly just cloudflare, some image optimization and setting expires headers in my .htaccess file.

Interesting article. I actually had the chance to spend some time playing around with Google’s mod_pagespeed over the weekend to automatically perform a lot of the same optimisations you covered in this article.

I wasn’t entirely sure what to expect since the site was already well optimised and fairly lean but I ended up making massive gains in less than 10 mins of work. I ended up writing about how I did it in case you or anyone else is interested. http://bit.ly/1judmk0

Hi Ian,

I too am a big fan of fast loading pages.

To demonstrate, our website loads the home page in 466ms, that is from server with the caches empty. Of course this depends upon the network speed.

I mention the time not to show off, but to show that it is easily possible if, and this is really important, the whole idea of reducing load time is considered from the beginning of the process.

Once the low hanging fruit have been taken care of, such as the amount of data transfer, as identified in your article, it is down to efficient use of server resources:

* Server side caching of code

* Database connection time

* Database access time

Etc. This is where the large systems fail, in my experience, the back end is designed for flexibility but at the cost of performance.

Like so much in life, there isn’t one simple answer, there are several answers to different bits of the puzzle.

Wonderful article Ian!

I must agree with Spencer about the CloudFlare as well. It basically takes very little time to configure and we gained about 30% increase in revenues on our demo sites when I took a week and went over our server configuration, installed W3 total cache, mod_pagespeed, optimized images etc.

We are selling WP themes and I am just now running a Google Experiment A/B test on how page load speed affects sales. I will write a post about this on my blog when the test is finished.

I wish I could say “tough poo” on the blog I contribute to, oh well…

Excellent article. I found that the specs of the server hardware also have a very big influence on website speed. Find a more powerful solution and your website will speed up noticeably.

Nice post,

I love your website because of all type info available on your site and this post is very useful for me and all website maker friends and can read this post and stop to hacking and build a secure website.

Thank you.

Hello! This is really fascinating information. “A site that loads in 1 second has an e-commerce conversion rate 2.5x higher than a site that loads in 5 seconds.” — Are these US-based websites/companies? Thanks!

Thanks for reading, Portia.

Not necessarily, no. While we work with many US-based companies, we also work with global brands that were part of this study.

I was really surprised with your stat about ‘sites that load in 1 second have an e-commerce conversion rate 2.5x higher than a site that loads in 5 seconds’. I know pagespeed helps with conversion rates but I didn’t think it would make that much of an impact. I guess with e-commerce vs lead gen is that people tend to move around the site a lot, looking at different products, so pagespeed may be more critical.

Curious to know if these numbers are referring to FCP, LCP or what? Are we talking a fully-loaded site speed?

I’m interested in which metrics you tested to measure page load speed (FLT, FCP, LCP?). Which test did you use for it?

The graph only says seconds, but for what?

The other thing “A 4 second page load time at a 2.93% conversion rate results in $335”, the percentage number here is incorrect.