Updated on January 26, 2021, to include additional tips.

I don’t think I’ll ever stop making mistakes in Google Tag Manager (GTM), which means I’ll keep improving my tracking abilities through the lessons I learn. Thankfully, there are multiple tools we can test with before publishing to the site. Being able to do so has honestly made me a little too fearless about how creative I get with my tracking configurations.

Although not comprehensive, this post is meant to guide you through some common mistakes to check for when troubleshooting your GTM configurations. I’ll also provide some tips to help you use GTM more efficiently.

Tools for Testing Google Tag Manager Tags

First, I’ll briefly touch on a few tools I use to test tags in Google Tag Manager. I typically use a combination of a few, depending on what I’m testing. I’ll also share a few tips for some advanced tracking across each of these tools.

Besides GTM Preview Mode, there are a few other tools that you should use in tandem to have better visibility into how hits get parsed. Having the debugger open is most useful for identifying the exact values, events, and registered criteria. For example, it can tell you whether a form submission event gets sent, the exact click ID, the dataLayer values pushed, etc. However, it doesn’t necessarily show you how data will be consumed by the end platforms at a granular level (especially outside of Google Analytics).

Remember to take advantage of other extensions as well to see how hits register in their respective platforms, such as the Facebook Pixel Helper, Twitter Pixel Helper, and the Google Analytics Debugger (which is especially useful for viewing detailed eCommerce hits in the console).

Validate Hits in the Browser’s Developer Tools

Your browser’s developer tools (what I loosely call “the DOM,” after the Document Object Model they let you inspect) have plenty of useful panels to use while testing: checking cookie values in the Application panel, identifying element attributes in the Elements panel, returning dataLayer values and discovering errors in the Console, and testing tags before publishing in the Network panel. For this post, we’re only very briefly touching on the Network and Application panels.
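
As a rough illustration of returning dataLayer values from the console, the sketch below filters a dataLayer-style array for pushes with a given event name. The `form_submit` event name and the `formId` key are hypothetical; in the browser you would pass `window.dataLayer` instead of the sample array.

```javascript
// Return every dataLayer push whose `event` key matches eventName.
// Entries without a matching `event` key are skipped.
function findEvents(dataLayer, eventName) {
  return dataLayer.filter(function (entry) {
    return entry !== null && typeof entry === "object" && entry.event === eventName;
  });
}

// Hypothetical sample; in the browser console, use window.dataLayer instead.
const sampleDataLayer = [
  { event: "gtm.js" },
  { event: "form_submit", formId: "contact-form" },
  { event: "gtm.click" },
];

const submissions = findEvents(sampleDataLayer, "form_submit");
console.log(submissions.length, submissions[0].formId);
```

If the list comes back empty after you submit the form, the event was never pushed, so no trigger listening for it could have fired.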

The Network panel shows you which tags would fire with your current GTM configuration, even before publishing. Keep in mind that a tag firing does not guarantee the hit was accepted: those requests may not return 200 status codes. Enable Preview Mode in GTM, right-click on the page, hit “Inspect,” and navigate to “Network” at the top of the developer tools to view every hit sent out from the page. Once you’ve tested the action, you can enter “collect” into the filter box to find GA hits, or part of the name of the pixel that you’re testing (for example, “linkedin”), to see what hits were sent.

Successful hits return 200 status codes. In the screenshot above, the hit fired because of the tag we configured in GTM, but it came back with a 302 status code rather than the 200 that a hit actually delivered to the platform would return.
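
The filter-then-check-status routine can also be sketched in code. This assumes you have copied the requests out of the Network panel (for example, from a HAR export) into simple `{ url, status }` objects; that shape, the helper names, and the sample URLs are all hypothetical.

```javascript
// Keep only requests whose URL contains the filter text (case-insensitive),
// similar to typing "collect" or "linkedin" into the Network filter box.
function filterHits(requests, text) {
  const needle = text.toLowerCase();
  return requests.filter(function (req) {
    return req.url.toLowerCase().indexOf(needle) !== -1;
  });
}

// Split hits into those that returned 200 and those that need a closer look.
function splitByStatus(hits) {
  return {
    ok: hits.filter(function (hit) { return hit.status === 200; }),
    suspect: hits.filter(function (hit) { return hit.status !== 200; }),
  };
}

// Hypothetical sample of captured requests.
const requests = [
  { url: "https://www.google-analytics.com/collect?v=1&t=pageview", status: 200 },
  { url: "https://px.ads.linkedin.com/collect?pid=000000", status: 302 },
  { url: "https://www.example.com/styles.css", status: 200 },
];

const result = splitByStatus(filterHits(requests, "collect"));
console.log(result.ok.length, result.suspect.length);
```

Treat the `suspect` list as a prompt to investigate rather than proof of failure, since some platforms may answer with other status codes.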

Need to test an action that’s dependent on cookie tracking? You can clear your cookies in the developer tools’ Application panel to treat your session as the start of a new session or even as a new user. The most common reason I’ve used this is to track pop-ups that only appear for first-time users or at the beginning of a session.
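
If you only want to remove a single cookie rather than all of them, a console snippet can do the same job. This is a minimal sketch: the `popup_seen` cookie name is hypothetical, it assumes the cookie was set on the `/` path, and cookies flagged HttpOnly cannot be removed from script at all.

```javascript
// Build a cookie string that expires the named cookie immediately.
// A cookie set with a Domain attribute must be expired with that same domain.
function expiredCookie(name, domain) {
  let cookie = name + "=; expires=Thu, 01 Jan 1970 00:00:00 GMT; path=/";
  if (domain) {
    cookie += "; domain=" + domain;
  }
  return cookie;
}

// In the browser console, assigning the string to document.cookie removes the cookie.
// The guard keeps the sketch runnable outside a browser.
if (typeof document !== "undefined") {
  document.cookie = expiredCookie("popup_seen");
}
```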

Test at a Session-Level with Google Tag Assistant

Google Tag Assistant shows you hit details for published tags—which provides insights into hits and how sessions are registered across GA views. It offers a cleaner view of parsed hit data across an entire session or even across multiple sessions. Be sure to enable tracking across tabs and use the recording function to get the best use out of this tool.

Google Tag Assistant is most useful to test the following:

- Cross-domain tracking

- Custom UTM parameters

- Goal configurations

- Consistent values across a session (ex. user IDs and client IDs)
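
To illustrate the last item, here is a small sketch that checks whether the client ID stayed the same across a set of recorded hits. It assumes Universal Analytics style hit URLs, where the client ID travels in the `cid` query parameter; the sample URLs and ID values are made up.

```javascript
// Read the `cid` (client ID) query parameter from each hit URL and
// return the distinct values. One value means the ID stayed consistent.
function distinctClientIds(hitUrls) {
  const seen = [];
  hitUrls.forEach(function (hitUrl) {
    const cid = new URL(hitUrl).searchParams.get("cid");
    if (cid !== null && seen.indexOf(cid) === -1) {
      seen.push(cid);
    }
  });
  return seen;
}

// Hypothetical pageview hits recorded on two domains during one session.
const sessionHits = [
  "https://www.google-analytics.com/collect?v=1&t=pageview&cid=1111111111.2222222222&dl=https%3A%2F%2Fwww.example.com%2F",
  "https://www.google-analytics.com/collect?v=1&t=pageview&cid=1111111111.2222222222&dl=https%3A%2F%2Fshop.example.org%2F",
];

const clientIds = distinctClientIds(sessionHits);
console.log(clientIds.length === 1 ? "consistent" : "client ID changed", clientIds);
```

More than one distinct value across domains is a hint that cross-domain tracking is not passing the client ID along.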

Common Google Tag Manager Tracking Mistakes

Generally, I recommend just testing and iterating:

- Gather the necessary information.

- Set up testing tags.

- Try it out multiple times, across multiple scenarios, and over multiple days.

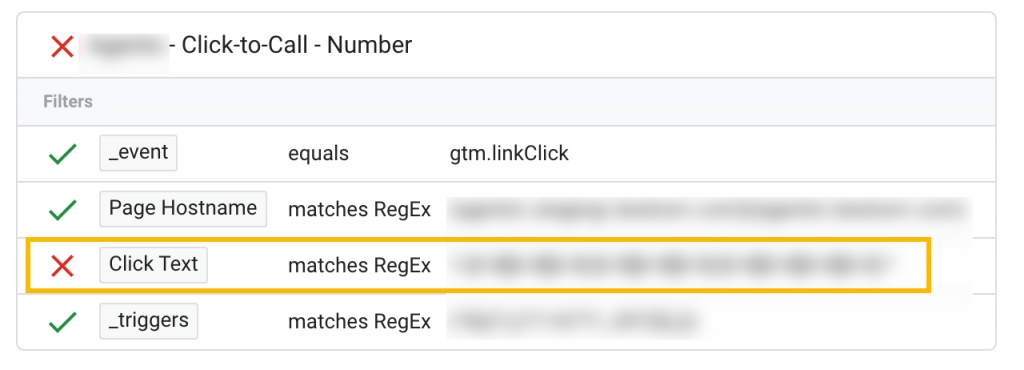

If you’ve tested your tag and failed to see it fire, use GTM Preview Mode for one of its most useful features—trigger criteria validation:

Click on the event on which the tag should have fired, find and open the tag under the “tags not fired” section, and identify what prevented your trigger from firing your tag.

The following suggestions are common issues to check for, most of which may not be as easily identifiable by validating your triggers.

Testing With the Wrong URL

The simplest oversight, yet one that occurs all too often: testing on a staging URL with a tag pointed at the production URL. If you need to limit your trigger to fire only on a specific hostname, add that condition to the trigger after you’ve validated every other part of it. You could even include both the staging and production hostnames in the trigger through regex if you’re using a Lookup Table variable to send the data to their respective properties.
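
A regex covering both hostnames might look like the sketch below. The hostnames are placeholders, and the helper function exists only to demonstrate the pattern; in GTM you would paste the pattern itself into a “matches RegEx” condition on the page hostname.

```javascript
// Match either the production or the staging hostname, and nothing else.
// Dots are escaped so they match literally; ^ and $ anchor the whole hostname.
const hostnamePattern = /^(www\.example\.com|staging\.example\.com)$/;

function isTrackedHostname(hostname) {
  return hostnamePattern.test(hostname);
}

console.log(isTrackedHostname("staging.example.com"));
console.log(isTrackedHostname("dev.example.com"));
```

The anchors matter: without them, a longer hostname that merely contains one of these strings would also match.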

Also, the most recent update of GTM’s Preview Mode added an option, enabled by default, that appends a debug signal to the URL. This appended parameter is registered in the page URL during testing, which can interfere when you’re testing triggers that depend on specific page URLs or page paths. You’re offered the option to uncheck the box when you enter debug mode, but keep in mind that the signal is meant to make your debug session more reliable.
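
When you need to compare a test URL against the URL your trigger expects, you can strip the debug parameter first. This sketch assumes the parameter is named `gtm_debug`, which is what I have seen Preview Mode append; check your own address bar during a debug session before relying on that name. The sample URLs are placeholders.

```javascript
// Remove the debug parameter so the URL can be compared against the
// page URL or page path conditions used in triggers.
function stripDebugParam(pageUrl) {
  const url = new URL(pageUrl);
  url.searchParams.delete("gtm_debug"); // assumed parameter name
  return url.toString();
}

console.log(stripDebugParam("https://www.example.com/thank-you?utm_source=test&gtm_debug=x"));
```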

Tags Are Firing Inconsistently

Tags that fire inconsistently can cause a significant gap in data collection. One of the first big mistakes I made, and learned from all too late, was not setting up triggers for every possible instance of the same action.

If you’re tracking a click-to-call action, you could track clicks on three separate elements outlined below that would all allow the user to take this action.

You could use click text on the phone number (purple highlight), click ID on the icon (blue highlight), and click on the entire element (green highlight).

When you’re attempting to track a specific action, scan across the site for every opportunity for that action, click around those elements, and don’t forget to test it across mobile and desktop. (You can use your browser’s developer tools to view and test the mobile version of your page.)

Hits Are Registering but Not Showing in the Preview Pane

Registered hits not showing in the preview pane is a unique issue that has caused so much turmoil that it’s quickly become something I check for even before I configure tags. Suppose you’ve identified the correct element ID, checked your tag configurations, ensured that the correct container ID exists, and still aren’t seeing your actions register or tags fire. In that case, odds are you’re working within an iframe.

I share the dread that most other analysts feel about iframes, so I now assume they could be behind any tracking issue and check for them early. Do you have a pop-up form? Check if it’s in an iframe. Do you have forms embedded through third parties? Scroll down the form to see if Preview Mode exists within the form. Do you have a pop-up chat functionality? It most likely lives in an iframe.

Unfortunately, most iframed content (especially through third parties) cannot be tracked, but it’s always worth a shot to check. If you need to assess the feasibility of a tracking request, you can check whether there’s an iframe by searching for “iframe” in the Elements panel or by right-clicking around the element for an option to view the frame source.
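
A quick way to run the same check from the console is to list every iframe on the page along with its source. This is a minimal sketch; the function name is my own, and the guard at the bottom simply keeps it from failing when run outside a browser.

```javascript
// List the src of every iframe found in the given document.
// Frames without a src attribute are reported as "(no src)".
function listIframeSources(doc) {
  const frames = Array.from(doc.querySelectorAll("iframe"));
  return frames.map(function (frame) {
    return frame.getAttribute("src") || "(no src)";
  });
}

// In the browser console, pass the page's own document.
if (typeof document !== "undefined") {
  console.log(listIframeSources(document));
}
```

If the form or chat widget you were asked to track shows up in this list with a source on another domain, that is a strong sign it sits outside your container’s reach.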

Hits Are Double-Firing

When hits are double-firing, it’s because you’ve configured a tag to do so, even if it was unintentional. The most common cause for me has been trying to avoid under-counting by setting up multiple triggers to capture every possible instance of an action.

Let’s use the click-to-call example from above. If you set up a click on that icon using an “all elements” trigger and a click on the click text of the number using “link clicks,” there’s a possibility that the tag would fire once on each of the two click events those triggers listen for: gtm.click (all elements) and gtm.linkClick (link clicks).

Test tags that have multiple triggers to ensure your tag only fires the desired number of times. In this case, we could just remove one of those triggers because both events fire on the same action. Another solution could be to limit the number of times your tag can fire on the page in your tag settings.
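
To make that check concrete, here is a minimal sketch that counts how often each tag fired across the events recorded for one user action. The log format is hypothetical (you would transcribe it from what Preview Mode shows), and the tag name is only an example; `gtm.click` and `gtm.linkClick` are the events that GTM’s all-elements and link-click triggers listen for.

```javascript
// Count how many times each tag fired across a list of events.
function countTagFires(events) {
  const counts = {};
  events.forEach(function (evt) {
    evt.tagsFired.forEach(function (tagName) {
      counts[tagName] = (counts[tagName] || 0) + 1;
    });
  });
  return counts;
}

// Hypothetical log for a single click on the phone number.
const clickLog = [
  { event: "gtm.click", tagsFired: ["GA – Event – Click to Call"] },
  { event: "gtm.linkClick", tagsFired: ["GA – Event – Click to Call"] },
];

const fireCounts = countTagFires(clickLog);
console.log(fireCounts);
```

Any count above one for a single action means the tag needs fewer triggers or a stricter firing limit.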

Tips for Efficient Tracking in GTM

Regardless of your tracking task’s complexity, organization and attention to detail will help you collect the right data. The following tips are part of a tracking process I’ve built up by making multiple mistakes (repeatedly) over the years.

Keeping GTM Clean

- Keep a consistent naming structure for your variables, triggers, and tags (ex. “[Platform] – [Type] – [Action]” or “dlv – [variable name]”).

- If you have multiple people making changes, keep your changes in separate workspaces.

- Conduct a “GTM Audit” once in a while and remove unnecessary items, so there aren’t multiples of the same variables, triggers, and tags.
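
During an audit, a naming structure is easier to enforce when you can check it mechanically. The sketch below tests names against the “[Platform] – [Type] – [Action]” pattern from the first tip; the function names and sample tag names are hypothetical, and you would need to get the real names out of your container yourself.

```javascript
// A name follows the convention when it has exactly three non-empty parts
// separated by " – " (space, en dash, space).
function followsNamingConvention(name) {
  const parts = name.split(" – ");
  return parts.length === 3 && parts.every(function (part) {
    return part.trim().length > 0;
  });
}

// Return the names that break the convention.
function findMisnamed(names) {
  return names.filter(function (name) {
    return !followsNamingConvention(name);
  });
}

// Hypothetical tag names.
const tagNames = [
  "GA – Event – Click to Call",
  "Facebook – Pixel – Lead",
  "click to call tag",
  "GA - Event - Scroll",
];

console.log(findMisnamed(tagNames));
```

Note that the last sample fails only because it uses hyphens instead of en dashes, which is exactly the kind of drift a consistent structure is meant to catch.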

Tips for Publishing

- Although I shouldn’t have to say this, I will: do not publish anything on a Friday or right before you’re about to become unavailable.

- Never underestimate the impact one “little” change can have on your data.

- Name your versions when you publish, so you have a general idea of what was changed without having to click through to each version.

- Only assign publishing rights to a few users who will be accountable for the final review.

Monitoring Your Data

- Share a channel or even an email thread with all stakeholders to notify them when major changes have been published—the more eyes, the better.

- Set reminders to check the data that has flowed in from the site after a few hours or the next day.

- If your GA data is extremely sensitive, consider setting up a test property to send the data to first before muddying up your primary data source.

- Set up GA alerts, or create a custom GA report or Google Data Studio (GDS) dashboard, to easily monitor changes to the data your tags are collecting.

- Work closely with your dev team to make sure everyone is aware of what changes will impact tracking.
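
The logic behind an alert on your tag data is usually a simple percentage-change check. GA alerts are configured in the interface rather than in code, so the sketch below only illustrates the comparison; the 30% threshold and the event counts are made-up numbers.

```javascript
// Percentage change from the previous value to the current one.
function percentChange(previous, current) {
  if (previous === 0) {
    return current === 0 ? 0 : Infinity;
  }
  return ((current - previous) / previous) * 100;
}

// Flag a metric when it moves more than thresholdPct in either direction.
function shouldAlert(previous, current, thresholdPct) {
  return Math.abs(percentChange(previous, current)) > thresholdPct;
}

// Hypothetical daily event counts before and after a publish.
console.log(shouldAlert(200, 100, 30));
console.log(shouldAlert(200, 190, 30));
```

Checking both directions matters, since a sudden jump after publishing can point to double-firing just as a drop can point to a broken trigger.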

Final Thoughts

Although it has taken many rounds of trial and error, this process has helped me understand how to collect higher-quality data. As I’ve stated, I’ll continue to expand it by learning from my mistakes. I hope that my experiences show you how important it is to be cautious and set up your safeguards. Once you’ve done so, you’ll have more space to be open to learning from your mishaps as well.
